In 2026, Apache Kafka has evolved into a streamlined, "ZooKeeper-less" event streaming platform. Its core purpose remains the same, handling real-time data at massive scale, but Kafka 4.0 and later releases have introduced significant architectural improvements.

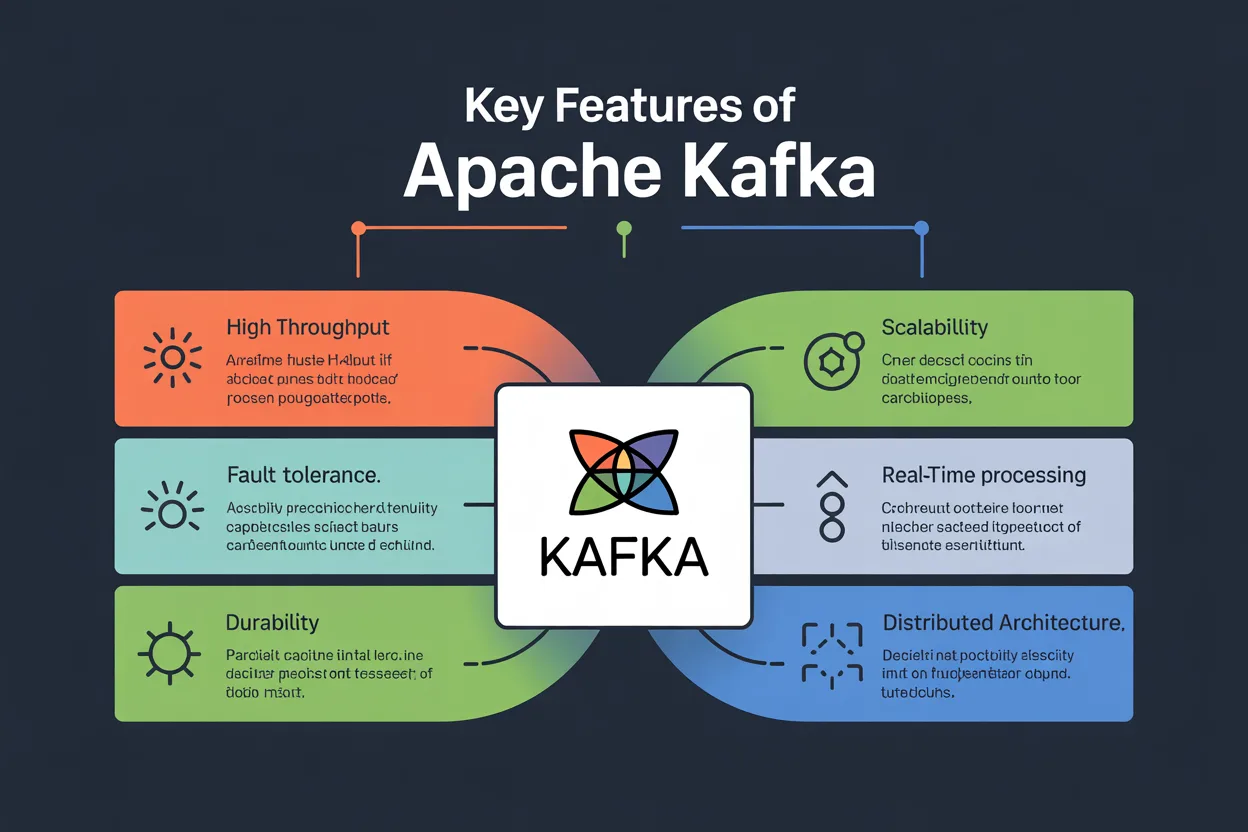

The key features of Apache Kafka can be divided into its core architectural pillars and the modern 2026 advancements.

1. Core Architectural Pillars

These fundamental features have made Kafka the industry standard for over a decade:

- High Throughput & Low Latency: Kafka can process millions of messages per second with latencies as low as 2ms. It achieves this by using a sequential "append-only" log and leveraging the operating system's page cache.

- Massive Scalability: Production Kafka clusters have been scaled to around a thousand brokers. Topics are split into partitions that are distributed across brokers, which allows parallel processing and lets large deployments handle trillions of messages per day.

- Permanent & Durable Storage: Unlike traditional message queues that delete data once it is consumed, Kafka stores data on disk and keeps it after consumers have read it. You can configure retention for a few hours, several years, or indefinitely.

- Exactly-Once Semantics (EOS): With idempotent producers and transactions, Kafka can ensure that each record takes effect exactly once within a Kafka read-process-write pipeline, even if a producer retries a send or a broker fails. This is critical for financial transactions and accurate analytics.
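
As a rough illustration of the partitioned append-only log and of how retried sends can be made idempotent, here is a minimal Python sketch. It is a toy in-memory model, not Kafka's implementation: the `PartitionLog` class, the `partition_for` helper, and the use of CRC32 as the key hash are all assumptions made for this example (Kafka's own partitioner uses a different hash). The idea it mirrors is that a broker tracks a sequence number per producer and ignores a retry it has already written.

```python
import zlib


class PartitionLog:
    """Toy model of one partition: an append-only list plus per-producer sequence tracking."""

    def __init__(self):
        self.records = []    # the index in this list plays the role of the offset
        self.last_seq = {}   # producer_id -> highest sequence number already appended

    def append(self, producer_id, seq, value):
        """Append value and return its offset, or return None for a duplicate retry."""
        if seq <= self.last_seq.get(producer_id, -1):
            return None      # retry of a record that was already written: ignore it
        self.records.append(value)
        self.last_seq[producer_id] = seq
        return len(self.records) - 1


def partition_for(key, num_partitions):
    """Map a key to a partition. CRC32 is a stand-in hash chosen for this sketch."""
    return zlib.crc32(key) % num_partitions


logs = [PartitionLog() for _ in range(3)]
p = partition_for(b"account-42", len(logs))   # the same key always maps to the same partition

first = logs[p].append("producer-1", 0, "debit 10")
retry = logs[p].append("producer-1", 0, "debit 10")   # same sequence number, e.g. after a timeout
second = logs[p].append("producer-1", 1, "credit 5")

print(first, retry, second, logs[p].records)
```

Because the retry carries a sequence number the log has already seen, it is dropped and the log ends up with two records rather than three.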

2. Modern 2026 Advancements (Kafka 4.0+)

As of 2026, the following features define the "Modern Kafka" experience:

KRaft Mode (ZooKeeper-less)

The biggest change in recent years is the complete removal of Apache ZooKeeper.

- Self-Managed Metadata: Kafka now manages its own metadata via the KRaft consensus protocol.

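- Faster Recovery: Controller failover is typically much faster than with ZooKeeper, because standby controllers already replicate the metadata log and do not have to reload cluster state before taking over.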

- Simplicity: You no longer need to manage a separate ZooKeeper cluster, reducing operational overhead.
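
KRaft is based on the Raft consensus algorithm, in which a metadata change is committed once a majority of the controller voters have it. The arithmetic below is a small sketch of what that implies for sizing a controller quorum; the function names are made up for this example and are not part of any Kafka API.

```python
def majority(voters):
    """Smallest number of voters that forms a majority."""
    return voters // 2 + 1


def tolerated_failures(voters):
    """How many voters can be down while a majority is still reachable."""
    return voters - majority(voters)


for n in (1, 3, 5):
    print(f"{n} controllers: majority={majority(n)}, tolerates {tolerated_failures(n)} failure(s)")
```

This is why controller quorums are usually sized with an odd number of nodes: going from three to four voters raises the majority to three without tolerating any additional failure.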

Tiered Storage (KIP-405)

Tiered storage decouples Compute from Storage, allowing for virtually unlimited data retention.

- Hot Tier: Recent data is kept on fast, local SSDs for low-latency real-time processing.

- Cold Tier: Older data is automatically offloaded to cheap object storage like Amazon S3 or Google Cloud Storage.

- Cost Efficiency: You can store years of data in Kafka without needing to add expensive broker nodes.
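
The hot/cold split can be pictured as a placement decision per log segment based on its age. The sketch below is a simplified model under stated assumptions: the `Segment` class, the `place_segments` function, and the hour-based thresholds are hypothetical. In a real cluster the equivalent behaviour is driven by topic settings such as `remote.storage.enable`, `local.retention.ms`, and `retention.ms`, and the brokers move the segments themselves.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    base_offset: int
    age_hours: int


def place_segments(segments, local_retention_hours, total_retention_hours):
    """Split segments into hot (local disk), cold (object storage), and expired."""
    hot, cold, expired = [], [], []
    for seg in segments:
        if seg.age_hours >= total_retention_hours:
            expired.append(seg.base_offset)   # past total retention: eligible for deletion
        elif seg.age_hours >= local_retention_hours:
            cold.append(seg.base_offset)      # past local retention: served from object storage
        else:
            hot.append(seg.base_offset)       # recent: served from the broker's local disk
    return hot, cold, expired


segments = [Segment(0, 800), Segment(1000, 72), Segment(2000, 30), Segment(3000, 2)]
hot, cold, expired = place_segments(segments, local_retention_hours=24, total_retention_hours=720)

print("hot:", hot, "cold:", cold, "expired:", expired)
```

With a 24-hour local window and a 30-day total window, only the newest segment stays on the broker, while the two older ones are held in the cold tier until they age out.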

Share Groups (Queues for Kafka)

Traditionally, within a consumer group, each partition is assigned to at most one consumer, so the partition count caps consumer parallelism. Share Groups (KIP-932) introduce elastic, queue-like scaling.

- Cooperative Consumption: Multiple consumers can now pull from the same partition simultaneously.

- Elastic Scaling: If you have a massive spike in data, you can simply add more consumers to a single partition to handle the load, similar to a traditional task queue like RabbitMQ.
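
To make the queue-like behaviour concrete, here is a toy Python model in which several consumers take individual records from a single partition, acknowledge them when done, and release them back if processing fails. The `SharedPartition` class and its method names are hypothetical and chosen only for this sketch; they are not the Kafka client API, and details such as acquisition timeouts and delivery-attempt limits are left out.

```python
from collections import deque


class SharedPartition:
    """Toy model: several consumers take records from one partition, queue-style."""

    def __init__(self, records):
        self.available = deque(enumerate(records))   # (offset, value) pairs not yet handed out
        self.in_flight = {}                          # offset -> (consumer, value)
        self.done = []                               # offsets acknowledged as processed

    def acquire(self, consumer):
        """Hand the next available record to a consumer, or None if nothing is available."""
        if not self.available:
            return None
        offset, value = self.available.popleft()
        self.in_flight[offset] = (consumer, value)
        return offset, value

    def acknowledge(self, offset):
        """Mark a record as successfully processed."""
        del self.in_flight[offset]
        self.done.append(offset)

    def release(self, offset):
        """Give a record back so another consumer can pick it up."""
        _, value = self.in_flight.pop(offset)
        self.available.appendleft((offset, value))


part = SharedPartition(["a", "b", "c"])
o1, _ = part.acquire("consumer-1")   # offset 0
o2, _ = part.acquire("consumer-2")   # offset 1, taken from the same partition
part.acknowledge(o2)
part.release(o1)                     # consumer-1 could not process its record
o3, _ = part.acquire("consumer-2")   # offset 0 is redelivered to another consumer
```

Since records are handed out one at a time rather than by whole partition, records may finish out of offset order, which is the trade-off for being able to add consumers freely.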

3. Comparison of Message Guarantees

Kafka allows you to choose the "strictness" of your data delivery based on your application's needs:

| Guarantee | Description | Use Case |
| --- | --- | --- |
| At-Most-Once | Messages may be lost but never redelivered. | Non-critical log telemetry. |
| At-Least-Once | Messages are never lost but may be duplicated. | Standard website tracking. |
| Exactly-Once | Each message is processed once and only once. | Payment processing, billing. |

4. The Ecosystem Tools

Kafka's utility is extended by three powerful built-in components:

- Kafka Connect: A no-code framework to "sink" Kafka data into databases (Snowflake, MongoDB) or "source" it from them.

- Kafka Streams: A lightweight library for real-time transformations (joins, filters, aggregations) within your Java/Kotlin apps.