Cloud infrastructure is the backbone of many modern businesses. It provides scalable computing, storage, and networking without the burden of buying and maintaining on-premises hardware. Yet as demand for AI, machine learning, and high-performance computing (HPC) grows, general-purpose CPU-based setups often fall short. GPU as a Service (GPUaaS) extends cloud infrastructure by delivering powerful graphics processing units on demand. The combination lets organizations run complex workloads efficiently, control costs, and shorten the path from idea to result.

The Core of Modern Cloud Infrastructure

Cloud infrastructure refers to the virtualized pool of resources—servers, storage, and networks—provided over the internet. It offers elasticity, allowing businesses to scale resources up or down based on needs. Pay-as-you-go models minimize upfront investments, making it ideal for startups and enterprises alike.

However, not all workloads suit standard CPU-based cloud infrastructure. Data-intensive tasks like training neural networks, rendering 3D graphics, or simulating scientific models require massive parallel processing power. CPUs are built around a relatively small number of powerful cores optimized for fast sequential work, so highly parallel jobs quickly hit bottlenecks. This is where GPUs shine. Designed for massive parallelism, GPUs run thousands of lightweight threads simultaneously, which can speed up suitable computations by an order of magnitude or more.
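How much of that speedup a whole job actually sees depends on how much of its runtime can run in parallel. Amdahl's law, a standard model that this article does not otherwise invoke, captures the effect: the serial remainder of a job caps the overall gain. The minimal sketch below assumes a hypothetical 50x speedup on the parallel portion; real figures vary by workload and hardware.

```python
def amdahl_speedup(parallel_fraction: float, parallel_speedup: float) -> float:
    """Overall speedup when only part of a job is accelerated (Amdahl's law).

    parallel_fraction: share of the original runtime that can run in parallel (0..1).
    parallel_speedup: how much faster that share runs on the accelerator.
    """
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if parallel_speedup <= 0:
        raise ValueError("parallel_speedup must be positive")
    serial_fraction = 1.0 - parallel_fraction
    # The serial part runs at the old speed; only the parallel part shrinks.
    return 1.0 / (serial_fraction + parallel_fraction / parallel_speedup)


if __name__ == "__main__":
    # Hypothetical: the parallel part of the job runs 50x faster on a GPU.
    for p in (0.50, 0.90, 0.99):
        print(f"parallel fraction {p:.2f}: {amdahl_speedup(p, 50.0):.1f}x overall")
```

With these assumed numbers, a job that is 90% parallel gains only about 8.5x overall, which is why profiling a workload before moving it to GPUs is worthwhile.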

What Is GPU as a Service?

GPU as a Service integrates high-end GPUs into cloud infrastructure, letting users access them remotely without buying expensive hardware. Instead of purchasing NVIDIA A100s or AMD Instinct accelerators, which can cost well over $10,000 each, you rent GPU capacity by the hour or reserve instances for longer terms.

Key features include:

- On-demand provisioning: Spin up GPU instances in minutes via APIs or dashboards.

- Scalability: Auto-scale clusters for bursty workloads, like peak AI training sessions.

- Global accessibility: Deploy in data centers worldwide to keep compute close to users and data, reducing latency.

- Managed services: Providers handle maintenance, cooling, and security updates.
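The auto-scaling idea can be sketched as a simple rule that sizes a GPU pool from the backlog of queued jobs. The function name, the jobs-per-node ratio, and the minimum and maximum limits are hypothetical; real providers expose their own autoscaling policies and APIs.

```python
import math


def desired_gpu_nodes(queued_jobs: int, jobs_per_node: int,
                      min_nodes: int = 0, max_nodes: int = 16) -> int:
    """Hypothetical scaling rule: size the GPU pool to drain the job queue.

    queued_jobs: jobs waiting to run.
    jobs_per_node: jobs one GPU node can handle at once (assumed constant).
    min_nodes / max_nodes: floor for warm capacity and cap for budget control.
    """
    if jobs_per_node <= 0:
        raise ValueError("jobs_per_node must be positive")
    needed = math.ceil(queued_jobs / jobs_per_node)
    # Clamp: never below the warm floor, never above the budget cap.
    return max(min_nodes, min(max_nodes, needed))


if __name__ == "__main__":
    for backlog in (0, 10, 1000):
        print(backlog, "queued ->", desired_gpu_nodes(backlog, jobs_per_node=4), "nodes")
```

A production policy would also smooth decisions over time, for example by waiting a few minutes before scaling down, so that short lulls in a bursty workload do not cause the pool to shrink and regrow repeatedly.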

For example, a healthcare firm analyzing medical images can provision a GPU cluster in the cloud to process a large backlog of scans overnight, rather than waiting weeks for local machines to work through it.

Key Benefits for Businesses

Adopting GPU as a Service within cloud infrastructure delivers tangible advantages.

First, cost efficiency. Capital expenses drop dramatically because you pay only while instances are running. Shutting down idle GPUs stops the charges, whereas owned hardware keeps depreciating in the rack whether it is busy or not.
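The trade-off between renting and owning can be made concrete with a rough break-even calculation. All prices below are hypothetical placeholders, and the model deliberately ignores power, cooling, staffing, and networking, which usually make ownership more expensive than the purchase price alone suggests.

```python
def breakeven_hours(purchase_price: float, hourly_rate: float) -> float:
    """Rental hours at which cumulative rental cost equals the purchase price."""
    return purchase_price / hourly_rate


def monthly_rental_cost(hourly_rate: float, hours_used_per_month: float) -> float:
    """Pay-as-you-go cost: billed only for the hours the instance runs."""
    return hourly_rate * hours_used_per_month


if __name__ == "__main__":
    price = 15_000.0  # hypothetical purchase price of one GPU, USD
    rate = 2.50       # hypothetical on-demand rate for one GPU, USD/hour
    hours = breakeven_hours(price, rate)
    # 730 hours is roughly one month of round-the-clock use (8,760 h / 12).
    print(f"Break-even after {hours:,.0f} rental hours "
          f"(~{hours / 730:.1f} months of 24/7 use)")
    for h in (40, 200, 730):  # light, moderate, and round-the-clock monthly use
        print(f"{h:>4} h/month -> ${monthly_rental_cost(rate, h):,.2f}")
```

Under these assumed figures, owning only starts to look cheaper after roughly eight months of continuous use, before counting operating costs; at light or bursty usage, renting is far cheaper.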

Second, accelerated performance. GPUs excel at the matrix multiplications central to deep learning. A training job that takes days on CPUs might finish in hours on GPUs, slashing time-to-insight.

Third, enhanced collaboration. Teams can reach shared cloud infrastructure from anywhere, which supports remote work. Code, configurations, and experiment scripts can be kept under version control with tools like Git, so ML pipelines stay consistent as they move between team members.

Consider e-commerce: During product launches, GPU as a Service can power real-time recommendation engines. These process user behavior data in near real time, and the more personalized suggestions can boost conversion rates.

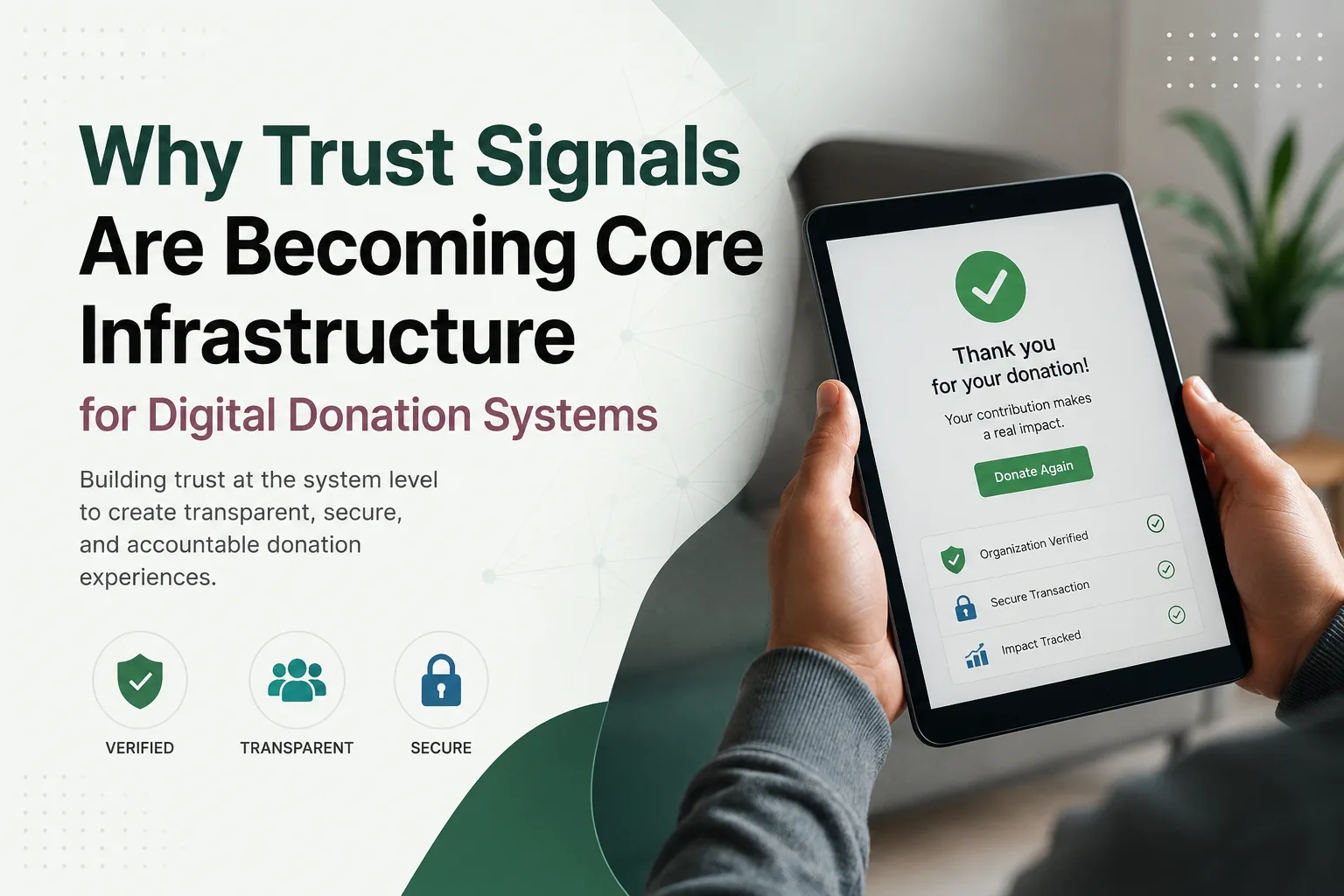

Security is another pillar. Cloud platforms that offer GPU as a Service typically provide encryption, compliance attestations such as SOC 2, support for regulations such as GDPR, and isolated virtual environments, protecting sensitive data during AI model training.

Real-World Applications Across Industries

GPU as a Service transforms diverse sectors when paired with robust cloud infrastructure.

- AI and Machine Learning: Train large language models or computer vision systems potentially 10-20x faster than on CPUs. Autonomous vehicle developers simulate driving scenarios at scale.

- Media and Entertainment: Render high-definition video or CGI for films. Streaming platforms transcode content in real time.

- Finance: Run Monte Carlo simulations for risk analysis or flag fraud with anomaly-detection models.

- Scientific Research: Climate modelers process vast datasets, and drug-discovery teams accelerate molecular simulations.

- Gaming: Cloud gaming services deliver photorealistic graphics without high-end local PCs.
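The finance example shows why such workloads map well to GPUs: each simulated scenario is independent, so thousands can be computed at once. The plain-Python sketch below runs on a CPU and only illustrates the structure of a Monte Carlo value-at-risk (VaR) estimate; the normally distributed returns, portfolio value, and volatility are simplifying assumptions, not a production risk model.

```python
import random


def monte_carlo_var(portfolio_value: float, mean_return: float, volatility: float,
                    n_scenarios: int = 100_000, confidence: float = 0.95,
                    seed: int = 42) -> float:
    """Estimate one-day value at risk by simulating normally distributed returns.

    Every scenario is independent of the others, which is what makes the method
    easy to spread across many GPU threads in real implementations.
    """
    rng = random.Random(seed)  # seeded so results are reproducible
    # Loss is the negative of the portfolio's gain in each scenario.
    losses = sorted(
        -portfolio_value * rng.gauss(mean_return, volatility)
        for _ in range(n_scenarios)
    )
    # VaR: the loss exceeded in only (1 - confidence) of the scenarios.
    index = int(confidence * n_scenarios)
    return losses[index]


if __name__ == "__main__":
    var = monte_carlo_var(1_000_000, mean_return=0.0, volatility=0.02)
    print(f"95% one-day VaR: ${var:,.0f}")
```

For these assumed inputs the estimate lands close to the analytic value of about $32,900 (1.645 standard deviations of a $1,000,000 portfolio with 2% daily volatility); GPU libraries generate and reduce the scenarios in parallel rather than in a Python loop.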

A research institute, for instance, used GPU as a Service in cloud infrastructure to model protein folding, advancing COVID-19 vaccine development months ahead of schedule.

Challenges and Best Practices

While powerful, integrating GPU as a Service isn't without hurdles. Moving large datasets into and out of the cloud takes time and can incur data-egress fees, and optimizing code for GPUs demands expertise (e.g., CUDA or ROCm proficiency).

Mitigate these with:

- Hybrid strategies: Combine CPUs for general tasks and GPUs for intensive ones.

- Spot instances: Use interruptible, low-cost GPUs for non-critical jobs or for jobs that save checkpoints and can resume after an interruption.

- Monitoring tools: Track utilization with dashboards to avoid over-provisioning.

- Frameworks: Leverage TensorFlow, PyTorch, or RAPIDS for GPU-native development.
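Spot capacity can be reclaimed by the provider with little warning, so jobs that run on it usually checkpoint their progress and resume from the last saved state. The toy loop below illustrates that pattern with a JSON file; the `train` function, its state fields, and the simulated interruption are hypothetical stand-ins for a real framework's checkpointing of model and optimizer state.

```python
import json
import os
import tempfile
from typing import Optional


def train(total_steps: int, ckpt_path: str, stop_after: Optional[int] = None) -> dict:
    """Toy training loop that checkpoints after every step and resumes if possible.

    stop_after simulates a spot-instance reclaim: the loop returns early after
    that many steps in the current session, leaving the checkpoint on disk.
    """
    state = {"step": 0, "loss": 1.0}
    if os.path.exists(ckpt_path):  # resume from the last saved state
        with open(ckpt_path) as f:
            state = json.load(f)

    steps_this_session = 0
    while state["step"] < total_steps:
        if stop_after is not None and steps_this_session >= stop_after:
            return state  # "interrupted"; progress is already saved
        state["loss"] *= 0.9  # stand-in for a real optimizer update
        state["step"] += 1
        steps_this_session += 1
        # Write to a temp file, then rename over the old checkpoint, so a crash
        # mid-write does not corrupt the last good checkpoint.
        tmp_path = ckpt_path + ".tmp"
        with open(tmp_path, "w") as f:
            json.dump(state, f)
        os.replace(tmp_path, ckpt_path)
    return state


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as workdir:
        path = os.path.join(workdir, "checkpoint.json")
        first = train(10, path, stop_after=3)  # instance reclaimed after 3 steps
        print("interrupted at step", first["step"])
        final = train(10, path)  # replacement instance picks up from step 3
        print("finished at step", final["step"])
```

Checkpointing every step is only reasonable for a toy loop; real jobs checkpoint every few minutes or on the provider's interruption notice, trading a little lost work for less I/O.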

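Start small: Benchmark representative workloads on free tiers or trial credits where available, then scale. Before planning distributed training, confirm that your provider offers multi-GPU instances with fast interconnects between the GPUs.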
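While benchmarking, utilization figures show whether provisioned GPUs are actually busy. NVIDIA's `nvidia-smi` tool can report them in CSV form, for example with `nvidia-smi --query-gpu=index,utilization.gpu,memory.used --format=csv,noheader,nounits`. The sketch below parses output of that shape and flags GPUs below a utilization threshold; the 20% threshold and the sample output are assumptions, and a real setup would track samples over time rather than a single snapshot.

```python
from typing import List


def find_underutilized(csv_text: str, threshold_pct: float = 20.0) -> List[int]:
    """Return indices of GPUs whose utilization is below threshold_pct.

    Expects lines like "0, 87, 30512" (index, utilization %, memory used in MiB),
    the shape produced by the nvidia-smi query in the text above.
    """
    idle = []
    for line in csv_text.strip().splitlines():
        if not line.strip():
            continue  # skip blank lines
        index, util, _mem_used = (field.strip() for field in line.split(","))
        if float(util) < threshold_pct:
            idle.append(int(index))
    return idle


if __name__ == "__main__":
    sample = """0, 92, 30512
1, 3, 1024
2, 15, 2048"""  # hypothetical snapshot of a three-GPU instance
    print(find_underutilized(sample))  # → [1, 2]
```

GPUs that stay in the flagged list across many snapshots are candidates for a smaller instance type or for consolidation onto fewer machines.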

The Future of Cloud Infrastructure and GPU as a Service

Looking ahead, GPU as a Service will evolve with cloud infrastructure. Expect tighter integration with edge computing for IoT, quantum-inspired accelerators, and AI-optimized networks that reduce data-transfer bottlenecks.

Sustainability drives innovation too: more energy-efficient GPUs lower the energy cost of each workload, aligning with green IT goals. Analysts predict that GPU cloud spending will exceed $10 billion annually by 2026, fueled by generative AI demand.

In summary, cloud infrastructure enhanced by GPU as a Service democratizes high-performance computing. It levels the playing field, enabling any organization to tackle ambitious projects without hardware barriers.

Ready to migrate? Assess your workloads today and explore GPU as a Service providers. The shift promises faster innovation and smarter resource use.