In October 2025, AWS's US-EAST-1 region went dark for 15 hours. Over 4 million users across more than 1,000 companies worldwide lost access to critical services. Financial transactions stalled. Airline booking systems went offline. Enterprise workflows froze. One month later, Microsoft Azure followed with an 8-hour global disruption triggered by a Front Door DNS misconfiguration that took down Azure and Microsoft 365 simultaneously, generating over 18,000 user-reported incidents at its peak. And just before both, in September 2025, multiple subsea cables running through the Red Sea near Jeddah were severed, forcing Azure to reroute intercontinental traffic through degraded paths and increasing latency and packet loss across Asia-Europe corridors for days.

For Indian enterprises, these weren't distant incidents that played out in someone else's infrastructure. India's digital economy is deeply integrated into global cloud infrastructure. AWS, Azure, and Google Cloud together experienced more than 100 service outages between August 2024 and August 2025, and every major hyperscaler counts Indian enterprises, from Bengaluru-based SaaS companies to Mumbai fintechs, among its fastest-growing customer bases. The lesson 2025 forced onto every engineering team that relies on a single cloud provider is not subtle: single-cloud dependency is a single point of failure, and that failure will eventually arrive.

Multi-cloud hedging, the practice of deliberately distributing workloads and failover capacity across more than one cloud provider, has moved from an architectural preference debated in design reviews to a business continuity mandate. This article looks at what that means in the specific context of India's data center landscape, what real failover testing reveals about its efficacy, and what the architecture requires to work when a hyperscaler goes dark.

India's Data Center Landscape: The Context for Multi-Cloud Strategy

India's data center market is undergoing one of the most rapid expansions of any country in the world. India's data center capacity rose from 350 MW in 2019 to 1,030 MW in 2024 and is projected to reach 1,825 MW by 2027. The growth is driven by a confluence of forces: hyperscaler capital expenditure commitments (AWS announced a ₹60,000 crore investment in its Hyderabad region alone in January 2025), data localization mandates under the Digital Personal Data Protection Act, and the explosion of AI workloads requiring high-density compute infrastructure.

Mumbai stands out as the dominant hub, accounting for 52% of India's total data center capacity, with Chennai ranking second at 21%. Bengaluru, Hyderabad, and Pune form the next tier. This geographic concentration has a direct resilience implication: Indian enterprises running workloads on a single cloud provider's India region are often relying on infrastructure that is geographically clustered within the same metro area. A regional network event, a power grid disruption, or a subsea cable cut affecting Mumbai's connectivity can therefore simultaneously affect the hyperscaler's India region and the enterprise's on-premises or colocation presence in that same city.

The Digital Personal Data Protection Act (DPDPA) adds a regulatory dimension that shapes how Indian enterprises can architect multi-cloud strategies. These regulations mandate localized data storage, enforce 72-hour breach disclosures, and impose severe penalties for non-compliance, with fines reaching ₹500 crore under India's DPDP legislation. For multi-cloud architectures, this means data replication across providers must be engineered to keep sensitive personal data within Indian geographic boundaries, not simply replicated to the nearest available region, which might be Singapore or Tokyo.
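One way to make that residency constraint testable is to check every replication target against an allow-list of India-located regions before a replication job is deployed. The following is a minimal sketch, not a compliance tool: the function name, the tuple layout, and the sample targets are hypothetical, and the region identifiers should be verified against each provider's current documentation.

```python
# Region identifiers assumed to be located in India (verify against provider docs).
INDIA_REGIONS = {
    "aws": {"ap-south-1", "ap-south-2"},                    # Mumbai, Hyderabad
    "azure": {"centralindia", "southindia", "westindia"},
    "gcp": {"asia-south1", "asia-south2"},                  # Mumbai, Delhi
}


def residency_violations(targets):
    """Return (provider, region, dataset) for personal data replicated outside India.

    Each target is a hypothetical tuple:
    (provider, region, dataset_name, contains_personal_data).
    """
    violations = []
    for provider, region, dataset, has_personal_data in targets:
        allowed = INDIA_REGIONS.get(provider, set())
        if has_personal_data and region not in allowed:
            violations.append((provider, region, dataset))
    return violations


# Hypothetical replication plan used only for illustration.
replication_targets = [
    ("aws", "ap-south-1", "customer_profiles", True),
    ("azure", "southeastasia", "customer_profiles", True),   # Singapore: flagged
    ("gcp", "asia-northeast1", "public_catalog", False),     # Tokyo, no personal data
]

print(residency_violations(replication_targets))
```

Running a check like this in the deployment pipeline turns the residency rule into a failing build rather than an audit finding.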

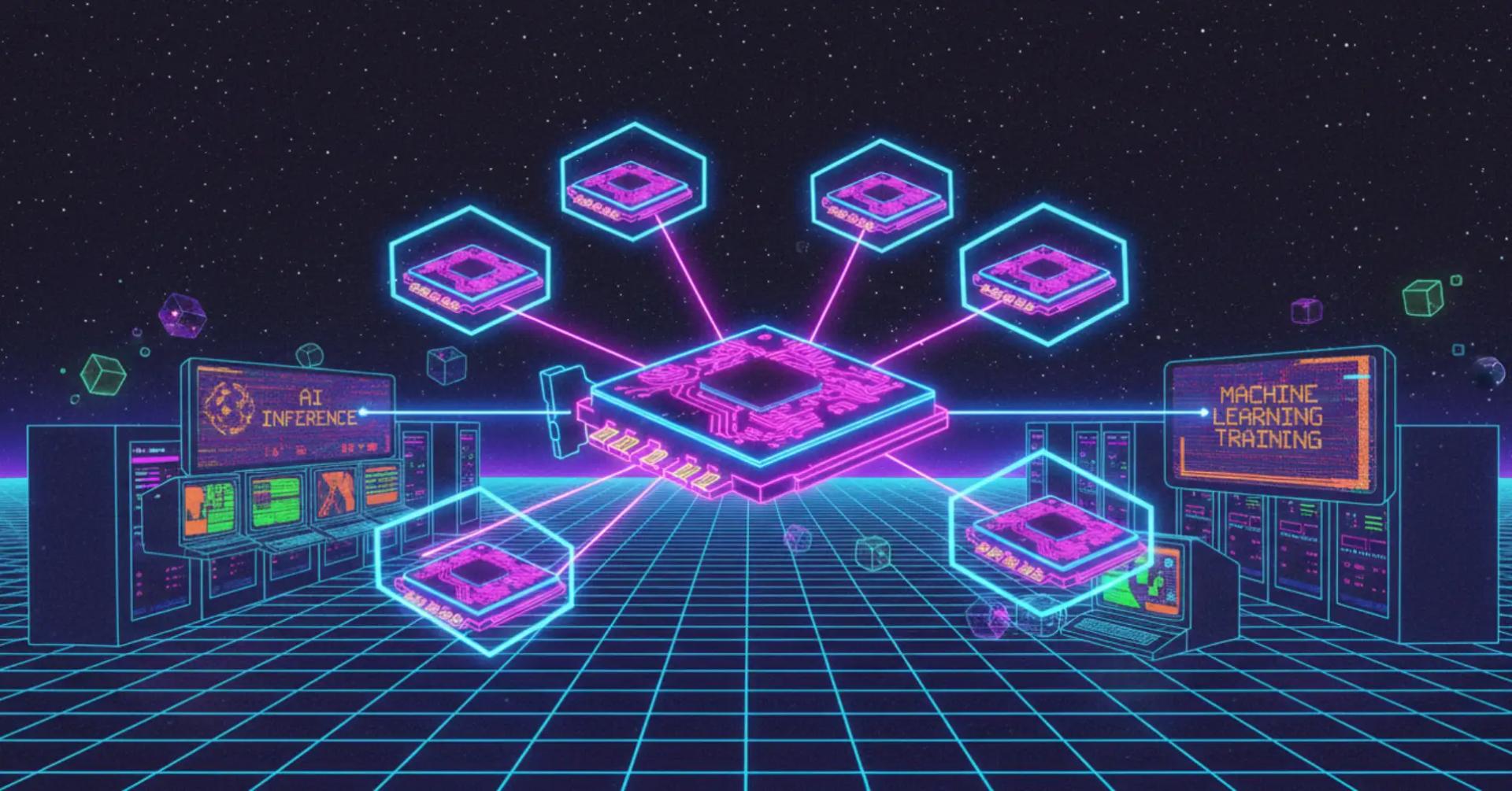

What Multi-Cloud Hedging Actually Means (and What It Doesn't)

There is a common misconception that multi-cloud resilience means running the same application stack twice, on two different providers, and manually switching when one goes down. This is neither the full picture nor how effective hedging works in practice.

Multi-cloud hedging exists on a spectrum of architectural maturity, and the right point on that spectrum depends on workload criticality, RTO/RPO targets, team capability, and budget.

Active-passive failover is the most common starting point. The primary workload runs entirely on one provider. A secondary environment, pre-provisioned or on warm standby, exists on a second provider. When the primary fails, traffic is redirected to the secondary. The RPO (Recovery Point Objective) depends on how frequently state is replicated to the secondary; the RTO (Recovery Time Objective) depends on how quickly DNS cutover and application startup on the secondary can complete. Passive secondary environments in Indian DCs typically target RTOs of 15 minutes to 4 hours depending on workload complexity.
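The arithmetic behind those two targets can be sketched in a few lines of Python. This is a simplified model under stated assumptions rather than a measurement: the `FailoverPlan` class, its field names, and the sample values are hypothetical, and real recovery times also depend on factors such as resolver behavior and data transfer time.

```python
from dataclasses import dataclass


@dataclass
class FailoverPlan:
    replication_interval_s: int  # how often state is shipped to the secondary
    detection_s: int             # time for monitoring to declare the primary down
    dns_ttl_s: int               # TTL on the DNS record that gets switched
    startup_s: int               # time for the secondary to start serving traffic

    def worst_case_rpo_s(self):
        # Data written since the last replication run is lost on failover.
        return self.replication_interval_s

    def worst_case_rto_s(self):
        # Detection, cached DNS expiry, and application startup happen in sequence.
        return self.detection_s + self.dns_ttl_s + self.startup_s


# Hypothetical warm-standby plan: 5-minute replication, 60-second TTL.
plan = FailoverPlan(replication_interval_s=300, detection_s=90,
                    dns_ttl_s=60, startup_s=600)

print(plan.worst_case_rpo_s() / 60, "minutes of data at risk")
print(plan.worst_case_rto_s() / 60, "minutes to recover")
```

Changing `dns_ttl_s` from 60 to 86,400 in this model adds a full day to the worst-case RTO, which is why TTLs get their own section below.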

Active-active deployment runs production workloads simultaneously across two or more providers, with a load balancer distributing traffic between them. Failover is near-instantaneous if one provider becomes unavailable; the load balancer routes all traffic to the surviving provider without manual intervention. Active-active recovery delivers under 1-hour RPO/RTO and ensures continuous operations even if a region goes offline. The architectural cost is real: state synchronization between clouds, consistent identity management, cross-cloud latency for writes to a primary data store, and significantly increased operational complexity. But for revenue-critical systems (payment gateways, trading platforms, real-time customer-facing APIs), the cost is often justified.

Selective workload hedging is the most pragmatic middle path: running authentication, payment processing, and public-facing APIs across multiple clouds while keeping internal tooling, batch jobs, and data warehousing on a single provider. This approach protects revenue-generating services without requiring a full doubling of operational overhead. For Indian fintechs, e-commerce platforms, and SaaS companies, this pattern maps naturally onto the business: the customer checkout flow is mission-critical; the internal analytics dashboard can tolerate a few hours of downtime.

Real Failover Testing: What Engineers Actually Discover

The gap between a documented multi-cloud architecture and a functioning multi-cloud failover is where most enterprise resilience strategies quietly fail. The AWS October 2025 outage made this visible at scale: organizations that believed they had multi-cloud resilience discovered, in production, that their secondary environment hadn't been updated in weeks, their DNS TTLs were set to 24 hours instead of 60 seconds, or their database replication had silently fallen behind by hours.

Real failover testing in Indian data center environments reveals several recurring failure patterns.

DNS TTL Misconfiguration Is the Most Common Single Point of Failure

When an outage occurs and traffic needs to redirect from one cloud provider to another, the mechanism is usually a DNS record change. The time it takes for that change to propagate globally and for cached DNS responses to expire is controlled by the Time-to-Live (TTL) value on the DNS record. Organizations that set DNS TTLs to hours or days for "stability" reasons discover during outages that even after their secondary environment is ready to accept traffic, the world's resolvers are still routing requests to the failed provider for hours.

Post-October 2025 AWS outage analysis highlighted this explicitly: AWS itself noted that if servers are in one AWS region and it goes down, switching DNS records alone is not enough, and it introduced a new Route 53 feature with a 60-minute recovery guarantee for DNS control plane operations. Indian engineering teams that tested their failover procedures after the outage discovered that many had TTLs in the 3,600 to 86,400 second range, making clean failover impossible within any reasonable RTO.

The fix is straightforward but requires advance planning: critical DNS records should carry TTLs of 60 to 300 seconds, set well before any anticipated maintenance or degraded health signals, and health-check-based DNS failover (using Route 53 health checks, Azure Traffic Manager, or Cloudflare Load Balancing) should be configured to reroute traffic automatically when primary endpoints stop responding, without requiring human DNS intervention at 3 a.m. during an incident.
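A TTL audit is easy to automate once the record set has been exported from the DNS provider. The sketch below assumes the export has already been loaded into a plain dictionary of record name to TTL in seconds; the record names, the `audit_ttls` helper, and the 300-second ceiling are illustrative choices, not a provider API.

```python
MAX_FAILOVER_TTL_S = 300  # upper bound for records that must fail over quickly


def audit_ttls(records, max_ttl_s=MAX_FAILOVER_TTL_S):
    """Return the records whose TTL exceeds the failover ceiling."""
    return {name: ttl for name, ttl in records.items() if ttl > max_ttl_s}


# Hypothetical export of critical records (name -> TTL in seconds).
critical_records = {
    "api.example.in": 60,
    "checkout.example.in": 3600,
    "auth.example.in": 86400,
    "status.example.in": 300,
}

for name, ttl in sorted(audit_ttls(critical_records).items()):
    print(f"{name}: TTL {ttl}s means clients may keep the old answer for up to {ttl // 60} minutes")
```

Running the audit on a schedule, rather than once, catches TTLs that are quietly raised again after an incident review.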

Data Replication Lag Is the Silent RPO Killer

For workloads with stateful components (relational databases, object stores, message queues), multi-cloud failover is only as clean as the replication lag between primary and secondary. An organization whose secondary cloud database is synchronized every hour has an RPO of one hour, regardless of what its architecture documentation says. Testing failover in a real scenario means more than checking that the secondary environment boots; it means verifying that the data state is consistent and that the application functions correctly against the secondary database. This is where most untested resilience strategies collapse.

In Indian enterprise environments, real failover testing typically surfaces two categories of replication problems. The first is replication lag growth under load: the secondary falls further and further behind the primary during peak business hours because the replication bandwidth or compute allocated to the secondary can't keep pace with peak write throughput. The second is schema divergence: application deployments update the primary database schema but fail to apply the same migrations to the secondary, meaning the secondary, if activated, would be running an older schema version incompatible with the current application code.

Both problems are invisible until failover is actually tested. Organizations that conduct quarterly failover drills in Indian DC environments consistently find and fix these issues before they become incident-time surprises. Organizations that only test annual DR scenarios tend to discover them during actual outages.
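Both failure patterns can be checked mechanically between drills. The sketch below assumes lag samples and applied-migration lists have already been collected from the two databases by whatever tooling the team uses; the function names, sample data, and the 300-second RPO target are hypothetical.

```python
def rpo_breaches(lag_samples, rpo_target_s):
    """Return the (timestamp, lag_seconds) samples where lag exceeded the RPO target."""
    return [(ts, lag) for ts, lag in lag_samples if lag > rpo_target_s]


def missing_migrations(primary_applied, secondary_applied):
    """Return migrations applied on the primary but absent on the secondary, in order."""
    applied_on_secondary = set(secondary_applied)
    return [m for m in primary_applied if m not in applied_on_secondary]


# Hypothetical lag samples: quiet overnight, falling behind at peak hours.
lag_samples = [("02:00", 4), ("11:00", 240), ("13:00", 1900), ("20:00", 35)]

# Hypothetical migration histories read from each database's migration table.
primary_migrations = ["0001_init", "0002_add_orders", "0003_add_refunds"]
secondary_migrations = ["0001_init", "0002_add_orders"]

print(rpo_breaches(lag_samples, rpo_target_s=300))
print(missing_migrations(primary_migrations, secondary_migrations))
```

A non-empty result from either function means the secondary would not meet its documented targets if it were activated right now.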

Cross-Cloud Networking Is More Complex Than It Appears on Paper

A multi-cloud architecture on a whiteboard draws clean arrows between Provider A and Provider B. In Indian data center environments, implementing those connections reliably requires navigating the specifics of inter-provider connectivity.

Direct interconnects between AWS, Azure, and GCP are not native; they require either the public internet (with the unpredictability and latency variance that implies) or private interconnection through a colocation provider. Equinix's interconnection hub in Chennai, which connects to three other Equinix data centers in Mumbai, is a key piece of Indian multi-cloud network architecture: enterprises that colocate a network presence at Equinix can establish private cross-connects between their cloud providers without traffic traversing the public internet. NTT's Delhi NCR data center and STT GDC's expanding Mumbai and Pune campuses play similar roles for northern and western India connectivity fabrics.

Testing shows that cross-cloud network latency between Mumbai-hosted AWS and Mumbai-hosted Azure, routing through private interconnect, typically adds 2 to 5 ms of round-trip latency compared to intra-cloud communication. For synchronous replication, where every write must be acknowledged by the secondary before it commits on the primary, this latency overhead directly increases write latency on the primary. For most applications this is acceptable; for ultra-low-latency trading systems or real-time bidding infrastructure, it requires architectural accommodation, such as asynchronous replication with a defined RPO rather than synchronous replication with a write latency penalty.
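The trade-off between the two replication modes comes down to simple arithmetic. The sketch below is a deliberately simplified model with illustrative numbers, not measurements: it assumes one round trip per synchronous write and a single connection issuing writes one after another, and all function names and constants are hypothetical.

```python
def sync_write_latency_ms(local_commit_ms, cross_cloud_rtt_ms):
    """A synchronous write waits for the local commit plus one round trip to the secondary."""
    return local_commit_ms + cross_cloud_rtt_ms


def async_write_latency_ms(local_commit_ms):
    """An asynchronous write returns after the local commit; replication happens later."""
    return local_commit_ms


def sequential_writes_per_second(write_latency_ms):
    """Upper bound on writes per second for one connection issuing writes back to back."""
    return 1000.0 / write_latency_ms


LOCAL_COMMIT_MS = 1.0      # illustrative local commit time
CROSS_CLOUD_RTT_MS = 4.0   # illustrative cross-cloud round trip

sync_ms = sync_write_latency_ms(LOCAL_COMMIT_MS, CROSS_CLOUD_RTT_MS)
async_ms = async_write_latency_ms(LOCAL_COMMIT_MS)

print("sync: ", sync_ms, "ms per write,", sequential_writes_per_second(sync_ms), "writes/s")
print("async:", async_ms, "ms per write,", sequential_writes_per_second(async_ms), "writes/s")
```

Under these assumed numbers, synchronous replication makes each write five times slower on a single connection, which is negligible for a checkout flow and disqualifying for a matching engine.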

Identity and Authentication Are Frequently the Last Thing Tested

Multi-cloud failover tests often focus on compute, storage, and networking and treat identity and authentication as solved problems. They are not. When failover routes traffic from Provider A to Provider B, every service that calls another service using provider-native identity mechanisms (AWS IAM roles, Azure Managed Identities, GCP Workload Identity) must have equivalent identity configurations on the secondary provider. Service-to-service calls that use provider-native credential injection will fail on the secondary if those credentials aren't pre-provisioned and tested.

Organizations often underestimate hidden dependencies; their reliance on third-party providers is frequently greater than assumed. In practice, Indian enterprise teams doing real failover tests regularly find services that were assumed to be provider-agnostic but contain hardcoded assumptions about AWS IAM or Azure AD endpoint URLs. Finding these dependencies in a test rather than during a live outage is exactly the return on investment that regular failover testing delivers.
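A first pass at finding such hardcoded assumptions can be done with a plain text scan of configuration and source files. The sketch below is a rough heuristic, not a complete dependency audit: the patterns cover only a few well-known provider domains, and the function name and sample configuration are hypothetical.

```python
import re

# A few well-known provider domains; a real scan would need a longer list.
PROVIDER_ENDPOINT_PATTERNS = {
    "aws": re.compile(r"[a-z0-9.-]+\.amazonaws\.com"),
    "azure": re.compile(r"login\.microsoftonline\.com|[a-z0-9.-]+\.azure\.com"),
    "gcp": re.compile(r"[a-z0-9.-]+\.googleapis\.com"),
}


def find_provider_endpoints(text):
    """Return (line_number, provider, hostname) for each provider-specific hostname found."""
    hits = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for provider, pattern in PROVIDER_ENDPOINT_PATTERNS.items():
            for match in pattern.finditer(line):
                hits.append((lineno, provider, match.group(0)))
    return hits


# Hypothetical service configuration that was assumed to be provider-agnostic.
sample_config = """\
auth_url = https://sts.ap-south-1.amazonaws.com
token_url = https://login.microsoftonline.com/common/oauth2/token
db_host = db.internal.example
"""

for lineno, provider, host in find_provider_endpoints(sample_config):
    print(f"line {lineno}: {provider} endpoint {host}")
```

Each hit is a question for the failover drill: does an equivalent endpoint and credential exist on the secondary provider, and has it been exercised?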

The RBI and SEBI Compliance Dimension

For Indian financial institutions, multi-cloud resilience is not purely a business continuity choice; it is increasingly a regulatory expectation. The Reserve Bank of India's Business Continuity Management guidelines and SEBI's operational resilience frameworks for market infrastructure institutions both emphasize the need for documented, tested disaster recovery capabilities. The specific RTO and RPO targets vary by institution type, but the direction of regulatory travel is clear: regulators expect institutions to demonstrate, through actual testing, that they can recover critical services within defined timeframes.

This regulatory context elevates multi-cloud hedging from a technical discussion to a boardroom conversation. When a bank's recovery capability must be evidenced to RBI during an audit, the difference between a documented architecture and a tested failover procedure is the difference between regulatory compliance and a corrective action. Fintechs and NBFCs operating under the Payment Aggregator and Payment Gateway framework face similar expectations around service continuity for payment rails.

The parallel with global financial regulations is instructive. The EU's Digital Operational Resilience Act sets expectations across Europe, demanding that businesses reduce cloud concentration risk and demonstrate that critical data and systems can survive provider-level failures. India's regulatory framework is moving in a comparable direction, and Indian financial institutions with global operations increasingly face both domestic and international resilience requirements simultaneously.

Architecting for Real Resilience: The Technical Checklist

What separates an Indian enterprise with genuine multi-cloud failover capability from one with a multi-cloud architecture that would collapse under a real provider outage?

Infrastructure as Code across providers. Terraform and Pulumi support all major cloud providers and enable consistent, version-controlled infrastructure definitions that can be deployed to secondary providers. Enterprises that manage their Indian cloud infrastructure through IaC can spin up a secondary environment faster and more reliably than those relying on manual console provisioning. IaC also ensures that security group configurations, network topology, and application dependencies are consistently applied on the secondary, eliminating the drift that develops in manually maintained environments.

Provider-agnostic data layer. Databases that use provider-native services (Amazon RDS, Azure SQL Database, Google Cloud Spanner) require provider-specific replication mechanisms and introduce vendor lock-in into the most critical layer of the application stack. Deploying databases on provider-agnostic engines (PostgreSQL, MySQL, Cassandra, Redis) running on VMs or managed Kubernetes clusters makes cross-cloud replication tractable using standard database replication protocols. Kafka for event streaming, deployed on MSK on AWS and Confluent on GCP, can serve as the replication backbone that keeps cross-cloud data states synchronized within defined RPO windows.

Automated health checks with low-TTL DNS failover. Health-check-based DNS failover, using routing policies that automatically demote unhealthy endpoints, should be the default configuration for all customer-facing services, not an optional enhancement. DNS TTLs for critical records should be set to 60 seconds during normal operations and verified in failover tests to ensure the expected propagation time is actually achieved.
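The demotion logic behind health-check-based failover can be illustrated with a small state machine. This is a conceptual sketch of the idea only, not how Route 53, Azure Traffic Manager, or Cloudflare implement it; the `FailoverRouter` class, the endpoint names, and the three-failure threshold are hypothetical.

```python
class FailoverRouter:
    """Route to the secondary after N consecutive failed health checks on the primary."""

    def __init__(self, primary, secondary, failure_threshold=3):
        self.primary = primary
        self.secondary = secondary
        self.failure_threshold = failure_threshold
        self.consecutive_failures = 0

    def record_check(self, primary_healthy):
        """Record one health check result and return the endpoint that should serve traffic."""
        if primary_healthy:
            self.consecutive_failures = 0
        else:
            self.consecutive_failures += 1
        return self.active_endpoint()

    def active_endpoint(self):
        if self.consecutive_failures >= self.failure_threshold:
            return self.secondary
        return self.primary


router = FailoverRouter("primary.example.in", "secondary.example.in")

# Simulated check results: one success, three failures, then recovery.
decisions = [router.record_check(ok) for ok in [True, False, False, False, True]]
print(decisions)
```

The threshold avoids flapping on a single dropped probe. This sketch fails back on the first healthy check, which is a simplification; production setups usually require several consecutive successes before returning traffic to the primary.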

Quarterly chaos engineering on the secondary path. The most effective resilience programs in Indian enterprise environments conduct scheduled chaos engineering exercises where the primary cloud is deliberately made unavailable (by blocking outbound traffic at the network level, simulating credential failures, or disabling primary region endpoints) and the secondary path is required to serve production traffic for a defined period, typically 30 minutes to 2 hours. These exercises surface replication lag, schema divergence, identity misconfigurations, and missing dependencies in an environment where finding them has no customer impact.

Where Independent Cloud Infrastructure Fits the Hedging Strategy

An increasingly important architectural decision for Indian enterprises building multi-cloud resilience is whether the secondary (or even primary) cloud environment should be a hyperscaler or an independent, specialized cloud provider. The October 2025 outage cluster demonstrated that hyperscaler failures can cluster in time: AWS and Azure failures in the same quarter affected different enterprise systems, but organizations that relied exclusively on hyperscalers had no independent fallback. Some 86% of organizations have adopted multi-cloud strategies for resilience, and a growing share of those strategies include at least one non-hyperscaler tier.

Independent cloud infrastructure providers, particularly those with dedicated bare-metal compute and India-based data center presence, serve a distinct role in multi-cloud hedging architectures. They are not subject to the same operational dependencies, shared control plane risks, or large-scale configuration change processes that caused the October 2025 AWS DynamoDB DNS cascade and the Azure Front Door misconfiguration. A control plane failure that takes down a hyperscaler's DNS resolution will not affect an independent provider's infrastructure because they operate entirely separate control planes.

For Indian enterprises requiring data residency, dedicated compute, and infrastructure independence from hyperscaler-level blast radius events, providers like AceCloud, which offers high-performance cloud and bare-metal infrastructure with India-based deployment options, provide a meaningful secondary tier that is structurally independent from the AWS/Azure/GCP failure modes that generated 2025's headline outages. This isn't about replacing hyperscalers for the breadth of services they offer; it is about ensuring that the specific failure modes of hyperscaler control planes, shared DNS infrastructure, and centralized networking services cannot simultaneously take down both the primary and secondary tiers of a business-critical workload.

The architecture pattern that leading Indian enterprises are converging on: primary production on a hyperscaler (typically AWS or Azure, given their depth of managed services and India region presence), stateful replication and secondary failover on a structurally independent provider with Indian DC presence, and data sovereignty compliance enforced at both tiers. The secondary tier handles failover for the small number of business-critical, revenue-generating services; the hyperscaler handles the long tail of internal tooling, managed AI services, and batch workloads where a few hours of degradation is acceptable.

The Testing Cadence That Makes Multi-Cloud Resilience Real

Real multi-cloud hedging is not an architecture. It is a practice. The architecture enables the practice; the practice determines whether the architecture works when it matters. The Mean Time To Restore (MTTR) averages 80 minutes globally, but that average conceals enormous variance between organizations that test regularly and those that discover the reality of their resilience posture during live incidents.

Indian enterprises that have invested in real multi-cloud failover capability and verified it through quarterly testing experienced the October and November 2025 outages as inconveniences rather than crises. Their traffic shifted. Their secondary environments absorbed load. Their monitoring dashboards showed green within minutes. Their customers noticed nothing.

Indian enterprises that had architectures in PowerPoints and documentation in Confluence experienced those same outages as multi-hour incidents with executive escalation, manual handover procedures, and SLA conversations with affected customers. IT downtime cost enterprises an average of $14,056 per minute in 2024. For Indian mid-market companies, even a fraction of that figure makes the investment in genuine multi-cloud failover capability economically straightforward to justify.
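The economics can be made concrete with the two figures quoted in this article, the $14,056 per-minute average and the 80-minute global MTTR. The sketch below is back-of-the-envelope arithmetic only; the function name and the $1,400 per-minute mid-market figure are hypothetical placeholders, not data.

```python
AVERAGE_COST_PER_MINUTE_USD = 14_056  # 2024 enterprise average quoted above
GLOBAL_AVERAGE_MTTR_MIN = 80          # global average MTTR quoted above


def downtime_cost_usd(minutes, cost_per_minute_usd=AVERAGE_COST_PER_MINUTE_USD):
    """Estimated cost of an outage lasting the given number of minutes."""
    return minutes * cost_per_minute_usd


# One average-length outage at the quoted enterprise average.
print(downtime_cost_usd(GLOBAL_AVERAGE_MTTR_MIN))

# Hypothetical mid-market company at roughly a tenth of the average rate.
print(downtime_cost_usd(GLOBAL_AVERAGE_MTTR_MIN, cost_per_minute_usd=1_400))
```

Comparing either number against the annual cost of a warm secondary environment and four drills a year is usually enough to settle the budget conversation.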

The gap is not budget or technology. The gap is testing cadence and the organizational willingness to treat resilience as a continuous practice rather than a one-time architecture decision. In India's rapidly expanding data center ecosystem, with new hyperscaler regions, new independent providers, and new regulatory expectations all converging simultaneously, the teams that build that practice now will be the ones whose infrastructure survives whatever 2026's equivalent of the October 2025 AWS outage turns out to be.

For teams looking to anchor their secondary failover tier in dedicated, high-performance Indian cloud infrastructure that operates independently of hyperscaler control plane risk, AceCloud's cloud infrastructure provides the compute backbone for a resilience architecture that treats hyperscaler failures not as catastrophes, but as events the system was already designed to survive.

Multi-cloud resilience is not the same as multi-cloud architecture. One is a diagram. The other is a quarterly tested, actively maintained operational capability, and the distance between them is measured in minutes of downtime per year.