The rise of artificial intelligence (AI) in education is nothing short of a paradigm shift. From automated grading systems to generative tools capable of producing essays in seconds, AI is transforming how students learn, how educators teach, and how academic work is evaluated. But this transformation raises a thorny question: is AI eroding the foundations of academic integrity, or is it simply pushing us to redefine what integrity means in a digital age? With platforms offering assignment support, the line between assistance and misconduct blurs, prompting educators, students, and institutions to grapple with new ethical dilemmas. This exploration unpacks AI’s dual role as both a disruptor and a catalyst for evolution, probing its implications for academic honesty and the future of learning.

The Promise and Peril of AI in Education

AI’s integration into education is both exhilarating and unsettling. Tools like ChatGPT, Grammarly, and specialized platforms for math problem-solving have democratized access to learning resources. Students can now receive instant feedback on their writing, clarify complex concepts, or explore alternative approaches to problem-solving, all with a few clicks. A 2024 study published in the Journal of Educational Technology found that 82% of college students used AI tools at least occasionally to support their studies, citing improved efficiency and understanding.

But there’s a shadow side. The same tools that empower learning can be misused. Generative AI can produce essays, code, or even creative works that pass as original, challenging traditional notions of authorship. A 2023 incident at a major U.S. university saw dozens of students flagged for submitting AI-generated assignments, sparking debates over plagiarism detection and punishment. The ease of accessing such tools raises a question: when does legitimate support cross into academic dishonesty? And how do we define integrity when AI can mimic human effort so convincingly?

Academic Integrity: A Shifting Concept

Academic integrity has long been anchored in principles of honesty, trust, and accountability. Students are expected to submit original work, cite sources, and demonstrate their learning authentically. Yet, AI complicates these expectations. If a student uses an AI tool to brainstorm ideas, is that cheating? What if they use it to polish their prose or generate a first draft? The boundaries are murky, and institutions are struggling to keep up.

Historically, plagiarism was easier to define: copying text without attribution was a clear violation. But AI-generated content often lacks a direct source to cite, and paraphrasing tools can obscure origins further. Turnitin, a leading plagiarism detection service, reported in 2025 that its AI-detection capabilities flagged 15% of submitted assignments as potentially AI-generated, but only half of those flags resulted in disciplinary action because institutional policies were ambiguous. This uncertainty underscores a critical point: academic integrity isn’t static. It evolves with technology, and AI is forcing us to rethink what constitutes “original” work.

Let’s reflect. Is the issue AI itself, or our failure to adapt? Perhaps the problem lies in clinging to outdated assessment models that prioritize product over process. If a student uses AI to generate an essay but learns nothing, integrity is compromised. But if they use it as a tool to deepen understanding—say, by analyzing AI-generated drafts and refining them—could that be a legitimate evolution of learning? The distinction hinges on intent and transparency, yet few institutions have clear guidelines on AI use.

The Role of AI in Supporting Learning

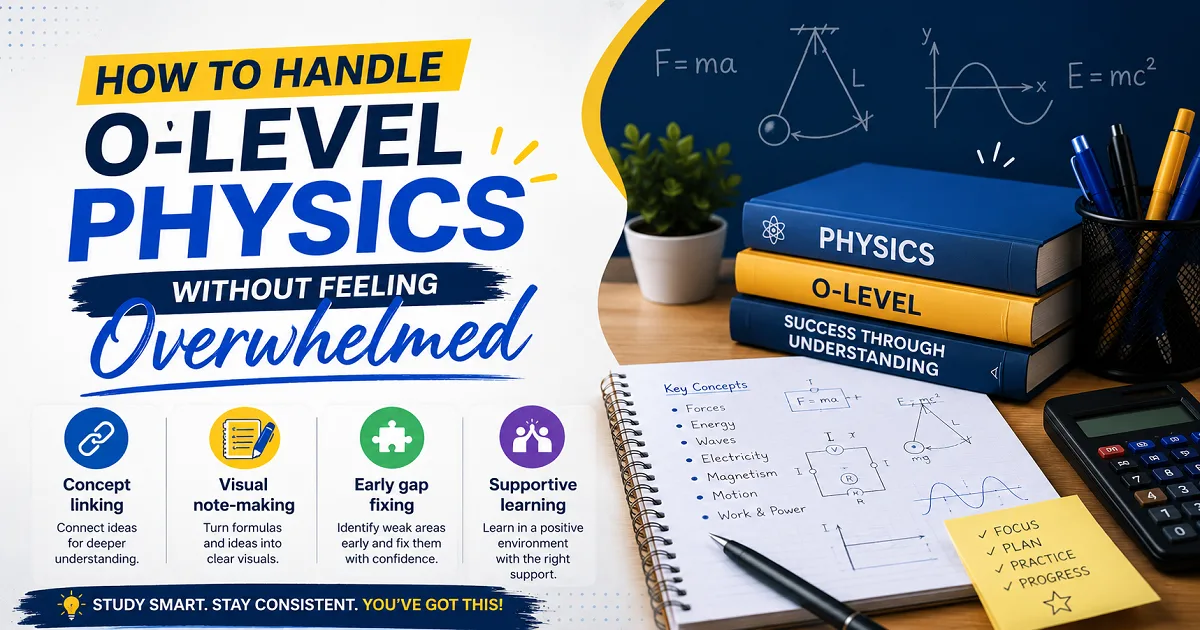

AI’s potential to enhance learning is immense. Adaptive platforms like Khan Academy tailor content to individual needs, while tools like Wolfram Alpha help students break down complex problems step-by-step. In writing, AI can serve as a virtual tutor, offering suggestions on structure, clarity, or tone. For non-native English speakers, tools like Grammarly or DeepL can level the playing field, enabling them to express ideas more confidently.

Consider the case of assignment support platforms. These services, often powered by AI, provide feedback, outlines, or even model answers to guide students. A 2024 survey by EdTech Review found that 65% of students using such platforms felt more confident in their work, particularly in high-stakes assignments. But here’s the rub: where does “support” end and “outsourcing” begin? If a student submits an AI-polished essay without disclosing its use, is that dishonest? Or is it simply leveraging available tools, much like using a calculator in math?

This tension highlights a broader issue: AI doesn’t exist in a vacuum. Its impact depends on how it’s used and regulated. Institutions that ban AI outright risk stifling innovation, while those that embrace it without boundaries invite abuse. A middle path—clear policies on permissible AI use, coupled with assessments that prioritize critical thinking—seems necessary. But crafting such policies is no small feat.

The Challenges of Detecting AI Misuse

Detecting AI-generated content is a cat-and-mouse game. Plagiarism detection tools have evolved to include AI-specific algorithms, but they’re not foolproof. A 2025 study published in the British Educational Research Journal found that advanced AI models could evade detection in 30% of cases by mimicking human writing styles. Meanwhile, false positives (human work flagged as AI-generated) can unfairly penalize students, eroding trust.

Human oversight remains critical. Educators can often spot AI-generated work through inconsistencies in voice, overly polished prose, or factual inaccuracies that slip through algorithms. But this requires time and expertise, resources stretched thin in large classes or online courses. Moreover, punishing AI misuse is tricky. Should a student face the same consequences for using AI to write an entire essay as for using it to brainstorm? Most institutions lack nuanced policies, defaulting to blanket bans or harsh penalties.

This raises a philosophical question: is the goal of education to police behavior or foster learning? If a student uses AI unethically but masters the material, have they cheated the system or themselves? Conversely, if they avoid AI but struggle to learn, have they upheld integrity at the cost of growth? These paradoxes suggest that AI isn’t just challenging integrity—it’s forcing us to clarify our educational priorities.

Evolving Assessment to Meet the AI Challenge

If AI is here to stay, assessments must evolve. Traditional essays, easily generated by AI, may no longer suffice as measures of learning. Instead, educators are exploring alternatives that emphasize process, creativity, and critical thinking. For example:

- Reflective Journals: Asking students to document their learning process, including how they used (or didn’t use) AI, shifts focus to metacognition.

- Oral Exams: Live discussions test understanding in ways AI can’t replicate, though they’re resource-intensive.

- Project-Based Learning: Complex, multi-step projects require synthesis and application, making AI a tool rather than a crutch.

- Peer Review: Collaborative evaluation fosters accountability and reduces reliance on automated outputs.

A 2025 pilot at the University of Melbourne found that project-based assessments reduced AI misuse by 40% compared to essay-based courses, as students engaged more deeply with material. But these methods aren’t universal fixes. They require training, time, and institutional support—luxuries not all educators have.

Another approach is embracing AI within assessments. Some instructors ask students to critique AI-generated work, comparing it to their own or identifying its limitations. This not only demystifies AI but also teaches critical evaluation skills. Yet, this assumes educators are AI-literate, a gap highlighted by a 2024 OECD report noting that 60% of teachers felt unprepared to integrate AI into teaching.

Ethical Considerations and the Role of Policy

AI’s impact on academic integrity extends beyond classrooms to broader ethical questions. Who owns AI-generated work? If a student uses an AI tool trained on copyrighted material, are they complicit in intellectual theft? And what about equity? Students in low-resource settings may lack access to premium AI tools, creating a new digital divide. A 2025 UNESCO report warned that unchecked AI use could exacerbate educational inequalities, particularly in developing nations.

Institutional policies are lagging. Many universities still rely on pre-AI honor codes, ill-equipped for nuanced scenarios. Clear guidelines—specifying when AI use is permissible, requiring disclosure, and outlining consequences—are essential. Some institutions, like the University of London, have introduced “AI transparency statements,” where students declare their use of tools. Early data suggests this fosters honesty without stifling innovation.

Educational writing services also play a role, offering ethical support while navigating gray areas. But regulation is needed to rein in predatory platforms that prioritize profit over integrity. Governments and accreditation bodies could step in, setting standards for AI use in education.

Conclusion: Evolution, Not Erosion

Is AI reshaping academic integrity or forcing us to evolve? The answer is both. AI challenges traditional notions of honesty by blurring lines between support and misconduct, but it also offers opportunities to rethink education. By shifting focus from policing to learning, embracing innovative assessments, and crafting clear policies, we can harness AI’s potential without sacrificing integrity.

This evolution won’t be easy. It demands investment in teacher training, equitable access to technology, and a willingness to question long-held assumptions. But the stakes are high. If we fail to adapt, we risk alienating students and undermining trust in education. If we succeed, we can create a system that values both innovation and integrity—a system where AI is a partner, not a threat.