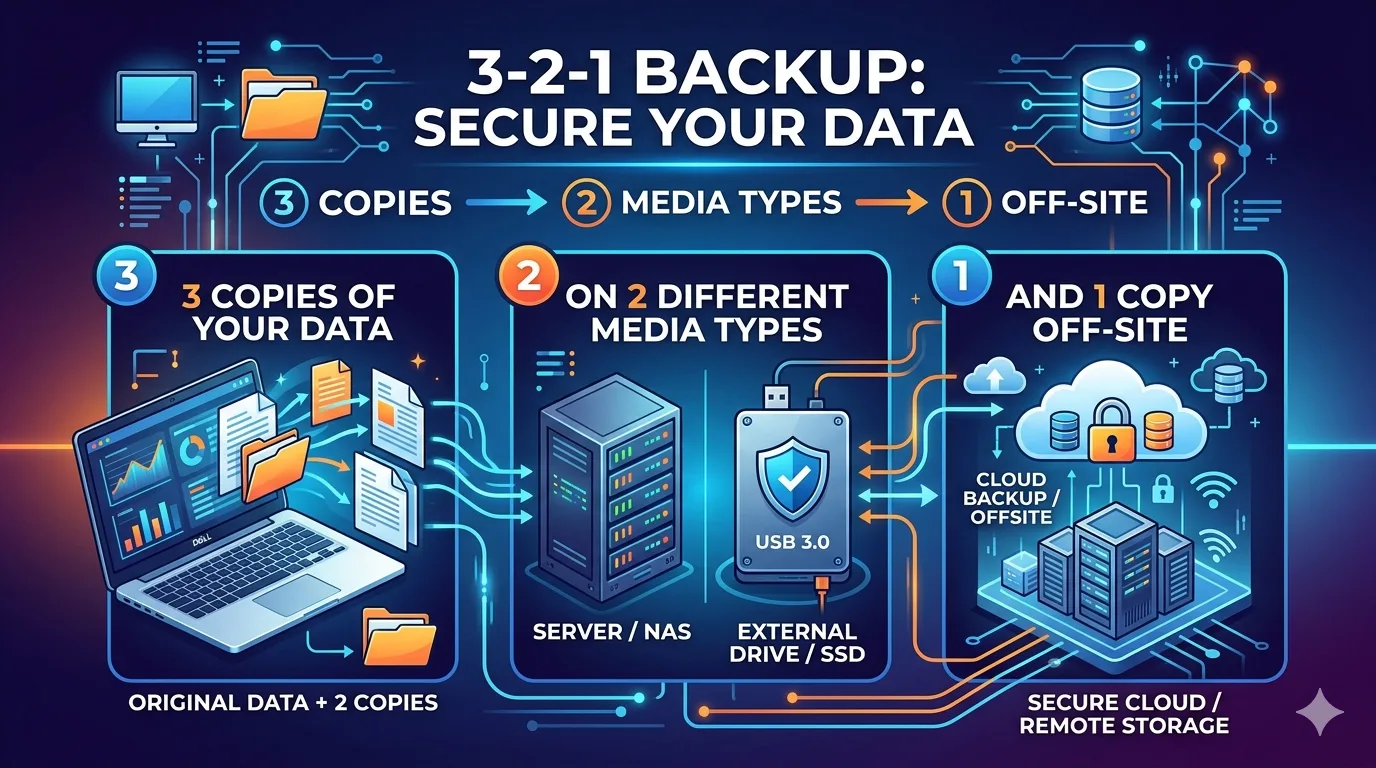

Data loss is a persistent threat in any digital environment, whether it stems from hardware degradation, ransomware, or administrative error. To improve data resilience and limit downtime, systems administrators and technology enthusiasts rely on a foundational framework: the 3-2-1 backup rule. Applying it removes single points of failure from your backup strategy, so that no one fault can destroy every copy of your data.

Reading this guide will equip you with a precise understanding of the 3-2-1 backup methodology, the mechanics behind its structural components, and practical deployment strategies for both local and enterprise-grade networks.

Deconstructing the 3-2-1 Architecture

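The 3-2-1 backup methodology rests on a simple probabilistic idea: the more independent copies of your data exist, the less likely it is that all of them are lost at once. By distributing data across multiple copies, media types, and locations, you systematically eliminate single points of failure.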

Maintain 3 Copies of Your Data

Total reliance on a single primary dataset is a severe operational vulnerability. The framework dictates maintaining at least three distinct copies of your data: the primary production data and two supplementary backups. Provided the copies fail independently, the probability that all three are lost at the same time is the product of their individual failure probabilities, which is far smaller than the risk to any single copy.
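The arithmetic behind this claim can be sketched in a few lines of Python. The 5% annual failure probability is a purely illustrative assumption, not a measured figure, and the function name `joint_failure_probability` is hypothetical.

```python
from math import prod

def joint_failure_probability(per_copy_failure_probs):
    """Probability that every copy fails in the same period.

    Only valid if the copies fail independently of one another.
    """
    return prod(per_copy_failure_probs)

# Illustrative assumption: each copy has a 5% chance of failing in a given year.
one_copy = joint_failure_probability([0.05])
three_copies = joint_failure_probability([0.05, 0.05, 0.05])

print(f"one copy:     {one_copy:.6f}")
print(f"three copies: {three_copies:.6f}")
```

The result only holds when the independence assumption does. Copies that share an array, a firmware version, or a building share failure causes, which is why the remaining two rules address media and location.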

Utilize 2 Different Storage Media

Storing every backup on identical hardware exposes your data to common-mode failures. If a specific firmware bug or environmental factor compromises a storage array, the mirrored drives within that array are exposed to the same fault. To isolate this risk, use at least two different storage media or device types. A standard configuration might pair a local Network Attached Storage (NAS) array built on mechanical HDDs with a solid-state drive (SSD) array or magnetic tape storage.

Secure 1 Offsite Copy

Local redundancy fails during site-wide disasters such as fires, floods, or power surges. To protect your data against the loss of an entire site, at least one backup copy must reside in an offsite location. This can be achieved through asynchronous replication to a secondary physical data center or by using secure cloud object storage.
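The three requirements above can be expressed as a small inventory check. This is a minimal sketch: the `Copy` record, its field names, and the media labels are hypothetical and stand in for whatever your own asset inventory records.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Copy:
    name: str
    media: str      # e.g. "hdd", "ssd", "tape", "cloud-object"
    offsite: bool   # True if stored away from the primary site

def check_3_2_1(copies):
    """Return a dict mapping each rule to whether the inventory satisfies it."""
    return {
        "three_copies": len(copies) >= 3,
        "two_media": len({c.media for c in copies}) >= 2,
        "one_offsite": any(c.offsite for c in copies),
    }

# Example inventory: production data plus two backups.
inventory = [
    Copy("workstation", "ssd", offsite=False),
    Copy("nas", "hdd", offsite=False),
    Copy("cloud-bucket", "cloud-object", offsite=True),
]

result = check_3_2_1(inventory)
print(result)
print("compliant:", all(result.values()))
```

Removing the cloud entry from `inventory` makes both the `three_copies` and `one_offsite` checks fail, which shows how a single offsite copy often carries two of the three requirements.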

The Imperative for the 3-2-1 Framework

System uptime and data integrity are non-negotiable in technology environments. The 3-2-1 rule addresses the most common causes of data loss: ransomware, hardware failure, and site-wide outages.

Mitigation of Data Loss Scenarios

Ransomware operators deliberately target local backups to block recovery and pressure organizations into paying a ransom. An air-gapped or immutable offsite copy neutralizes this threat vector. Hardware degradation, such as unexpected sector failure on mechanical drives or SSD controller failure, is also far less damaging when a copy on a different media type is actively maintained.

Optimizing Disaster Recovery

In disaster recovery planning, two metrics define the targets. The Recovery Time Objective (RTO) is how quickly operations must resume after an outage. The Recovery Point Objective (RPO) is how much recent data, measured in time, you can afford to lose. A local backup supports a short RTO for standard file restorations because data does not have to cross a WAN link. The offsite backup keeps the RPO achievable during catastrophic site failures, allowing infrastructure engineers to rebuild environments as they existed at the most recent offsite backup.
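A rough way to check a backup plan against these two targets is sketched below. The dataset size, throughput figures, and target values are illustrative assumptions, and the helper names are hypothetical. The restore estimate covers transfer time only.

```python
from datetime import timedelta

def meets_rpo(backup_interval: timedelta, rpo: timedelta) -> bool:
    # Worst case, a failure strikes just before the next backup runs,
    # so up to one full interval of changes is lost.
    return backup_interval <= rpo

def estimated_restore_time(size_gb: float, throughput_mb_per_s: float) -> timedelta:
    # Transfer time only (1 GB = 1000 MB); ignores setup and verification.
    return timedelta(seconds=size_gb * 1000 / throughput_mb_per_s)

def meets_rto(restore_time: timedelta, rto: timedelta) -> bool:
    return restore_time <= rto

# Illustrative targets and figures (assumptions, not recommendations).
rpo = timedelta(hours=24)
rto = timedelta(hours=4)
nightly = timedelta(hours=24)

local_restore = estimated_restore_time(500, 100)     # 500 GB over a 100 MB/s LAN
offsite_restore = estimated_restore_time(500, 12.5)  # 500 GB over a 12.5 MB/s WAN link

print("nightly backups meet RPO:", meets_rpo(nightly, rpo))
print("local restore:", local_restore, "meets RTO:", meets_rto(local_restore, rto))
print("offsite restore:", offsite_restore, "meets RTO:", meets_rto(offsite_restore, rto))
```

Under these assumed numbers the local restore fits inside the four-hour RTO while the offsite restore does not, which is the practical reason to keep both copies.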

Executing the Strategy for Individual Power Users

Technology enthusiasts can deploy the 3-2-1 rule without enterprise budgets. A standard personal implementation involves localized hardware and consumer-tier cloud access.

Your primary workstation holds the production data. Your first backup target should be an automated local solution, such as an external NVMe SSD or a local NAS running a RAID configuration. This provides the second copy, and it also satisfies the media requirement as long as the device differs in type from the workstation's internal drive. Finally, send encrypted backups to a cloud storage provider such as Amazon S3, Backblaze B2, or Google Cloud Storage. Automating this upload keeps the third copy safely offsite.
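The local leg of this setup can be sketched with the Python standard library alone: copy a file to the backup target, then confirm the copy by comparing SHA-256 checksums. The function names and paths are hypothetical, and the demo uses temporary directories in place of a real workstation folder and NAS mount. The offsite upload is omitted because it depends on your provider's own tooling.

```python
import hashlib
import shutil
import tempfile
from pathlib import Path

def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large files do not need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()

def backup_file(source: Path, target_dir: Path) -> Path:
    """Copy source into target_dir and verify the copy by checksum."""
    target_dir.mkdir(parents=True, exist_ok=True)
    target = target_dir / source.name
    shutil.copy2(source, target)  # copy2 also preserves timestamps
    if sha256_of(source) != sha256_of(target):
        raise IOError(f"checksum mismatch for {target}")
    return target

# Demo with throwaway directories standing in for real storage locations.
with tempfile.TemporaryDirectory() as tmp:
    src = Path(tmp) / "primary" / "notes.txt"
    src.parent.mkdir()
    src.write_text("production data")
    copied = backup_file(src, Path(tmp) / "local_backup")
    print("verified copy at", copied.name)
```

Verifying after the copy catches silent corruption during transfer. It does not protect against corruption already present in the source, which is one reason to keep more than one backup generation.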

Scaling the Architecture for Enterprise Environments

Business networks demand a more sophisticated implementation of the 3-2-1 rule, focusing heavily on automation, encryption, and compliance.

Enterprise deployments typically use Storage Area Networks (SAN) for primary production data. The first backup tier is often a dedicated backup server running deduplication software. To satisfy the distinct media and offsite requirements, organizations implement automated tape libraries or replicate backups to cold cloud storage tiers such as Amazon S3 Glacier, typically trading slower retrieval for lower cost. Enterprise administrators should also enforce immutable storage policies to prevent compromised service accounts from modifying or deleting archival data.
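To illustrate what deduplication on the backup tier does, here is a toy content-addressed store. It is a sketch under simplifying assumptions: it uses fixed-size chunks and an in-memory dictionary, and the `DedupStore` class is hypothetical rather than the method of any particular product. Commercial tools often use variable-size chunking and persistent indexes.

```python
import hashlib

class DedupStore:
    """Toy content-addressed store: identical chunks are kept only once."""

    def __init__(self, chunk_size: int = 4096):
        self.chunk_size = chunk_size
        self.chunks = {}  # SHA-256 hex digest -> chunk bytes

    def put(self, data: bytes) -> list[str]:
        """Store data and return its recipe: the ordered list of chunk digests."""
        recipe = []
        for i in range(0, len(data), self.chunk_size):
            chunk = data[i:i + self.chunk_size]
            key = hashlib.sha256(chunk).hexdigest()
            self.chunks.setdefault(key, chunk)  # skip chunks already stored
            recipe.append(key)
        return recipe

    def get(self, recipe: list[str]) -> bytes:
        """Rebuild the original data from its recipe."""
        return b"".join(self.chunks[key] for key in recipe)

# Two backups that share most of their content (tiny chunks for readability).
store = DedupStore(chunk_size=4)
monday = store.put(b"AAAABBBBCCCC")
tuesday = store.put(b"AAAABBBBDDDD")

print("logical chunks:", len(monday) + len(tuesday))
print("stored chunks: ", len(store.chunks))
```

The two backups reference six chunks in total but only four are stored, because the unchanged chunks are shared between them. Both backups remain fully restorable through their own recipes.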

Achieving Total Data Assurance

Protecting data integrity requires moving beyond ad-hoc file copying to a systematic, redundant architecture. The 3-2-1 backup rule provides a simple, checkable defense against hardware failure, cyber threats, and site-wide disasters.

To fortify your data infrastructure, audit your current storage topology. Identify any missing copies, media types, or offsite locations, automate your backup pipelines, and verify that your offsite encryption keys are intact and accessible so your data remains recoverable precisely when you need it most.