In the history of scientific progress, discovery has usually involved a great deal of trial and error: hypothesize, synthesize, test, and repeat. This empirical approach has produced significant results, yet it is frequently constrained by time, cost, and the limits of human intuition. Today, generative artificial intelligence is opening a new era in scientific research. The technology is not only speeding up the pace of discovery; it is also changing the way we think about scientific innovation in fields as diverse as molecular design and materials science.

The New Scientific Method: From Analysis to Generation

Traditional computational methods in science have predominantly been discriminative, concentrating on classification and prediction. These models answer questions such as "Will this compound inhibit a certain enzyme?" or "Does this material have enough tensile strength?" This approach is useful, but it remains reactive: it can only evaluate candidates that someone has already proposed.

Generative models represent a different paradigm. They give us the tools to ask proactive, creative questions:

- "Create a new molecule that only affects this cancer pathway."

- "Suggest a material architecture that stores as much energy as possible while keeping the weight down."

- "Create possible catalysts that make this chemical reaction work better."

This change makes scientists the architects of discovery, guiding AI systems toward candidates that humans might never have imagined.

The Architectures Powering Discovery

Several generative architectures have proven particularly potent in scientific domains:

Variational Autoencoders (VAEs) are very good at learning structured, continuous latent representations of scientific data. A VAE trained on known molecular structures can propose new compounds by sampling from this latent space and decoding the samples. This makes it easier to explore chemical neighborhoods around compounds with the properties you want.
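As a rough illustration of what sampling from a latent space means, the sketch below draws a latent point with the standard reparameterization step and walks a straight line between two latent codes. It assumes a trained encoder and decoder exist elsewhere; the 2-D latent vectors and the names `reparameterize` and `interpolate` are hypothetical and chosen only for this example.

```python
import math
import random

def reparameterize(mu, log_var, rng):
    """Sample z = mu + sigma * eps with eps ~ N(0, 1), independently per dimension."""
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0)
            for m, lv in zip(mu, log_var)]

def interpolate(z_a, z_b, steps):
    """Return `steps` evenly spaced latent points from z_a to z_b, inclusive."""
    if steps < 2:
        raise ValueError("steps must be at least 2")
    return [[a + (b - a) * i / (steps - 1) for a, b in zip(z_a, z_b)]
            for i in range(steps)]

rng = random.Random(0)

# Hypothetical encoder outputs for two known molecules (2-D latent space).
mu_a, log_var_a = [0.0, 1.0], [-2.0, -2.0]
mu_b = [2.0, -1.0]

z_sample = reparameterize(mu_a, log_var_a, rng)  # a latent point near molecule A
path = interpolate(mu_a, mu_b, steps=5)          # latent path from A to B

# Each latent point would be passed to a trained decoder (not shown here)
# to obtain a candidate structure.
```

Decoding points along `path` is one simple way to look for compounds that sit between two known molecules; whether the decoded structures are chemically valid depends entirely on the trained model.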

Generative Adversarial Networks (GANs) have been adapted to generate realistic molecular structures and material arrangements. The adversarial training process pushes the generator toward candidates that a discriminator cannot easily distinguish from the valid, high-performing examples in the training data.

Diffusion Models have become very useful tools that gradually turn random noise into complex, valid structures through a learned denoising process. Their high-quality samples make them well suited to finding novel and diverse candidates in many scientific fields.
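To make the noise-to-structure idea more concrete, here is a minimal sketch of the forward (noising) half of a diffusion model, using the closed form x_t = sqrt(alpha_bar_t) * x_0 + sqrt(1 - alpha_bar_t) * eps. The linear schedule values, the 1000-step horizon, and the toy coordinate vector are assumptions for illustration. The learned denoising network that reverses this process is not shown.

```python
import math
import random

def linear_beta_schedule(num_steps, beta_start=1e-4, beta_end=0.02):
    """Noise variances beta_1..beta_T, linearly spaced (an assumed schedule)."""
    if num_steps < 2:
        raise ValueError("num_steps must be at least 2")
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]

def cumulative_alphas(betas):
    """alpha_bar_t = product over s <= t of (1 - beta_s)."""
    out, prod = [], 1.0
    for b in betas:
        prod *= 1.0 - b
        out.append(prod)
    return out

def noise_sample(x0, alpha_bar_t, rng):
    """Closed-form forward step: x_t = sqrt(a) * x0 + sqrt(1 - a) * eps."""
    scale = math.sqrt(alpha_bar_t)
    sigma = math.sqrt(1.0 - alpha_bar_t)
    return [scale * x + sigma * rng.gauss(0.0, 1.0) for x in x0]

rng = random.Random(0)
betas = linear_beta_schedule(1000)
alpha_bars = cumulative_alphas(betas)

x0 = [0.5, -1.2, 2.0]                               # toy stand-in for structure coordinates
x_early = noise_sample(x0, alpha_bars[9], rng)      # still close to x0
x_late = noise_sample(x0, alpha_bars[-1], rng)      # almost pure Gaussian noise
```

Generation runs in the opposite direction: starting from noise like `x_late`, a trained network removes a little noise at each step until a clean sample remains.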

Graph Neural Networks (GNNs) offer a natural way to represent and generate molecules because they natively handle the graph structure of chemical compounds, where atoms are nodes and bonds are edges.
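The atoms-as-nodes, bonds-as-edges view can be sketched in a few lines. The example below encodes the heavy atoms of ethanol as a small graph and runs one round of unweighted sum aggregation, which is the core of message passing. It is a simplification: a real GNN would add learned weight matrices and nonlinearities, and the helper names here are hypothetical.

```python
# Ethanol heavy-atom graph: C0 - C1 - O2 (hydrogens omitted for brevity).
atoms = ["C", "C", "O"]
bonds = [(0, 1), (1, 2)]

def one_hot(symbol, vocabulary):
    """Encode an atom symbol as a one-hot feature vector."""
    return [1.0 if symbol == v else 0.0 for v in vocabulary]

def adjacency_list(num_nodes, edges):
    """Build undirected neighbor lists from a list of bonds."""
    neighbors = [[] for _ in range(num_nodes)]
    for i, j in edges:
        neighbors[i].append(j)
        neighbors[j].append(i)
    return neighbors

def message_pass(features, neighbors):
    """One round: new feature = own feature + sum of neighbor features."""
    updated = []
    for node, feat in enumerate(features):
        agg = list(feat)
        for nb in neighbors[node]:
            agg = [a + b for a, b in zip(agg, features[nb])]
        updated.append(agg)
    return updated

vocab = ["C", "O"]
features = [one_hot(a, vocab) for a in atoms]
neighbors = adjacency_list(len(atoms), bonds)
hidden = message_pass(features, neighbors)
# hidden[1] now counts the carbons and oxygens within one bond of atom 1, itself included.
```

After one round, each atom's vector summarizes its immediate chemical environment; stacking more rounds lets information travel further along the bond graph.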

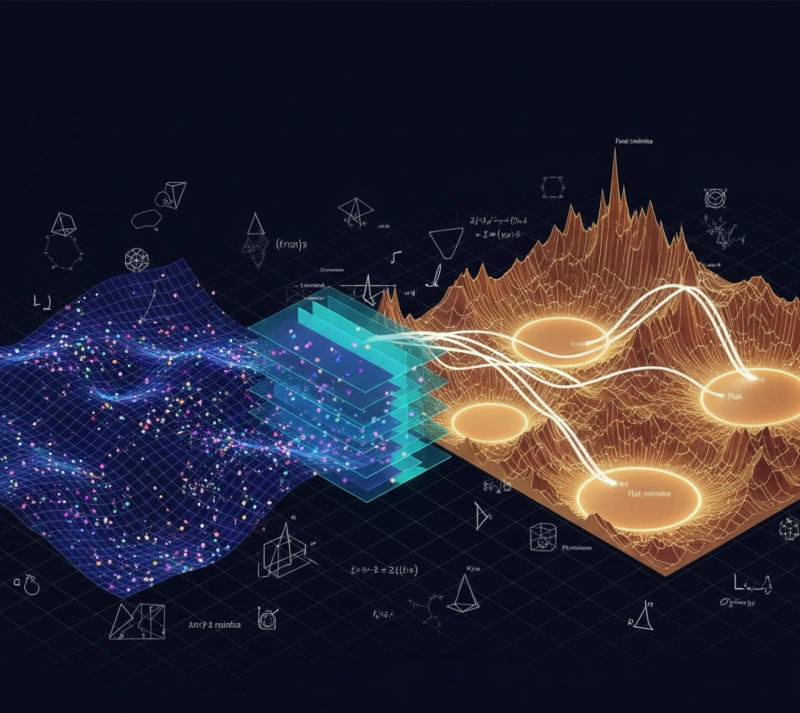

The Generative Discovery Pipeline

The integration of generative models establishes a powerful, iterative discovery cycle:

- AI-Driven Design: Models trained on huge collections of scientific data, like chemical databases and materials libraries, come up with millions of possible structures.

- In-Silico Screening: High-throughput computational simulations, including Density Functional Theory (DFT) calculations, swiftly assess generated candidates for stability, characteristics, and efficacy.

- Laboratory Validation: The best candidates move on to synthesis and testing in the lab.

- Feedback Integration: Results from wet-lab experiments help improve models, which leads to a cycle of improvement.

In favorable cases, this pipeline can shorten discovery timelines from years to months while greatly expanding the number of candidates considered.
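The four stages can be sketched as a toy closed loop. Everything here is a stand-in: candidates are plain numbers, `generate` mimics a generative model, `screen` mimics a cheap simulation, and `lab_test` mimics an experiment whose optimum deliberately differs a little from the screening proxy. The function names and numeric targets are assumptions made for illustration only.

```python
import random

def generate(center, spread, n, rng):
    """Stand-in for a generative model: propose n candidates near `center`."""
    return [rng.gauss(center, spread) for _ in range(n)]

def screen(candidate):
    """Stand-in for in-silico screening: a cheap proxy score (higher is better)."""
    return -abs(candidate - 3.0)

def lab_test(candidate):
    """Stand-in for laboratory validation: the measured property (higher is better)."""
    return -(candidate - 3.2) ** 2

def discovery_loop(rounds, n_generate, n_validate, seed=0):
    """Run design -> screening -> validation -> feedback for a number of rounds."""
    rng = random.Random(seed)
    center, validated = 0.0, []
    for _ in range(rounds):
        candidates = generate(center, 1.0, n_generate, rng)
        # Keep only the candidates the cheap screen ranks highest.
        shortlist = sorted(candidates, key=screen, reverse=True)[:n_validate]
        validated += [(lab_test(c), c) for c in shortlist]
        # Feedback: recenter the generator on the best validated candidate so far.
        center = max(validated)[1]
    return max(validated)

best_score, best_candidate = discovery_loop(rounds=5, n_generate=200, n_validate=5)
```

The point of the structure is that the expensive step (`lab_test`) only sees a handful of candidates per round, while its results still steer what the generator proposes next.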

Transformative Applications

Accelerated Drug Discovery: Researchers in the pharmaceutical field are using generative models to come up with new drug candidates, especially for targets that have been hard to reach with traditional methods. The ability to explore a very large chemical space has led to promising compounds for conditions such as antibiotic-resistant infections and neurodegenerative disorders.

Materials Informatics Revolution: In materials science, generative models are proposing new alloys, battery components, and photovoltaic materials with optimized properties. Using this approach, scientists have identified candidate high-temperature superconductors and better-performing catalytic materials.

Sustainable Technology Development: The climate crisis calls for quick progress in green technologies. Generative models are creating new catalysts for sustainable chemical processes, better materials for capturing carbon, and more efficient architectures for solar cells.

Challenges and Future Frontiers

Despite remarkable progress, significant challenges remain:

Synthesizability: A generated structure must be practically synthesizable using available methods and resources. Integrating synthetic feasibility prediction into generative models remains an active area of research.

Explainability: The "black box" nature of complex generative models can make it hard to understand why certain structures are suggested. Interpretable generative AI is important both for scientific trust and for turning model outputs into new insight.

Data Quality and Bias: The quality and variety of the training data have a big effect on how well the model works. Biases present in existing scientific literature can persist in generated outputs.

Multi-Objective Optimization: Real-world applications often need to balance several goals that may conflict, such as effectiveness, safety, and ease of manufacturing.
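One common way to reason about conflicting goals is to keep only the candidates that no other candidate beats on every objective at once (the Pareto front). The sketch below shows that filter on made-up scores; the molecule names and numbers are hypothetical, and all objectives are assumed to be scaled so that higher is better.

```python
def dominates(a, b):
    """True if a is at least as good as b everywhere and strictly better somewhere."""
    return (all(x >= y for x, y in zip(a, b))
            and any(x > y for x, y in zip(a, b)))

def pareto_front(candidates):
    """Return the candidates that are not dominated by any other candidate."""
    return {
        name: scores
        for name, scores in candidates.items()
        if not any(dominates(other, scores)
                   for other_name, other in candidates.items()
                   if other_name != name)
    }

# Hypothetical scores: (efficacy, safety, manufacturability), higher is better.
candidates = {
    "mol_a": (0.9, 0.4, 0.5),
    "mol_b": (0.6, 0.8, 0.7),
    "mol_c": (0.5, 0.7, 0.6),  # worse than mol_b on every objective
    "mol_d": (0.3, 0.9, 0.9),
}

front = pareto_front(candidates)
```

Here `mol_c` drops out because `mol_b` is better on all three axes, while the remaining candidates each represent a different trade-off that a scientist would still need to weigh.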

Future research will concentrate on integrating physical laws directly into model architectures, formulating active learning strategies for optimal experimental design, and constructing cohesive models that encompass multiple scientific domains.

Conclusion: The Age of AI-Augmented Science

Generative models for scientific discovery are more than just a technological advance; they mark a major change in how we interact with the natural world. We are moving from being observers and analysts to being active builders of matter and biological function.

This new way of thinking doesn't replace human scientists; it amplifies their creativity and intuition. Combining human expertise with AI's ability to explore spaces too large and complex for unaided human reasoning could help us address some of humanity's biggest problems, such as curing diseases and dealing with climate change, faster and more effectively than before.

The future of scientific discovery does not depend on choosing between human and artificial intelligence. Instead, it depends on using the powerful synergy between the two to write the next chapter in human progress.